HEPI Director, Nick Hillman, looks at the latest row on admissions to the University of Oxford.

In a speech on Friday, the Minister for Skills, Baroness Smith, strongly chastised her alma mater, the University of Oxford, for taking a third of its entrants from the 6% of kids who go to private schools.

In a section of the speech entitled ‘Challenging Oxford’, we were told the situation is ‘absurd’, ‘arcane’ and ‘can’t continue’:

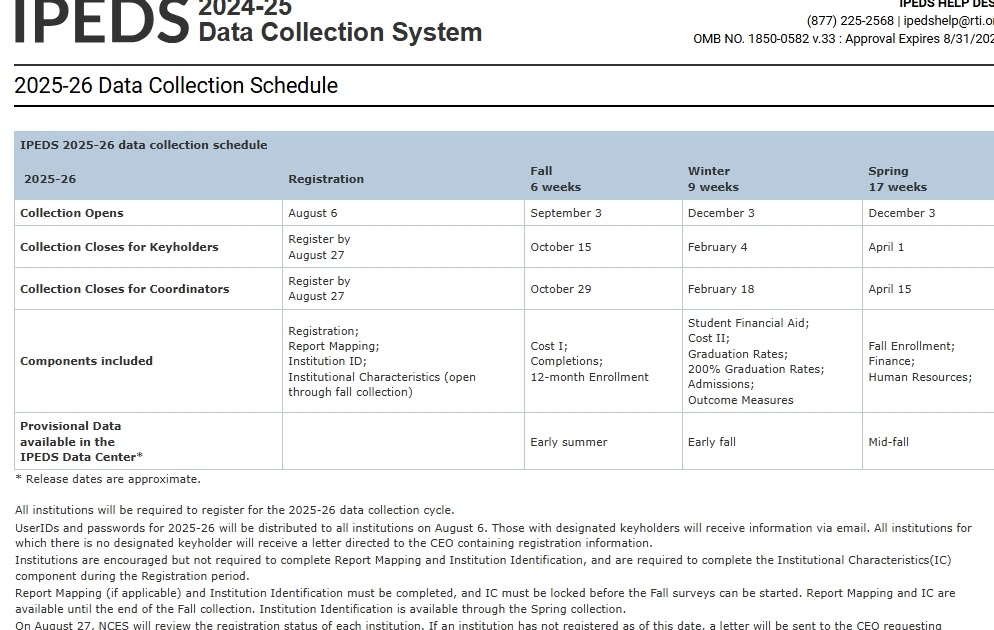

Oxford recently released their state school admissions data for 2024.

And the results were poor.

66.2% – the lowest entry rate since 2019.

I want to be clear, speaking at an Oxford college today, that this is unacceptable.

The university must do better.

The independent sector educates around 6% of school children in the UK.

But they make-up 33.8% of Oxford entrants.

Do you really think you’re finding the cream of the crop, if a third of your students come from 6% of the population?

It’s absurd.

Arcane, even.

And it can’t continue.

It’s because I care about Oxford and I understand the difference that it can make to people’s lives that I’m challenging you to do better. But it certainly isn’t only Oxford that has much further to go in ensuring access.

This language reminded me of the Laura Spence affair, which produced so much heat and so little light in the Blair / Brown years and which may even have set back sensible conversations on broadening access to selective higher education.

I wrote in a blog over the weekend that the Government are at risk of forgetting the benefit of education for education’s sake. That represents a political hole that Ministers should do everything to avoid, as it could come to define them. Ill-thought-through attacks on the most elite universities for their finely grained admissions decisions are a similar hole, best avoided. Just imagine if the Minister had set out plans to tackle a really big access problem, like boys’ educational underachievement, instead. The Trump/Harvard spat is something any progressive government should seek to avoid, not copy.

The latest chastisement is poorly founded for at least three specific reasons: the 6% figure is the wrong comparator in this context; the 33.8% number does not tell us what people tend to think it does; and Oxford’s current position of not closely monitoring the state/independent split is actually in line with the regulator’s guidance.

- The 6% figure is only half the proportion of school leavers educated at independent schools (12%). It is a snapshot of the proportion of all young people, across every year group, in private schools right now; it tells us nothing about those at the end of their schooling and on the cusp of higher education.

- The 33.8% number is unhelpful because 20%+ of Oxford’s new undergraduates hail from overseas and they are entirely ignored in the calculation. If you include the (over) one in five Oxford undergraduate entrants educated overseas, the proportion of Oxford’s intake made up of UK private school kids falls from something like one-third to more like one-quarter. This matters in part because the number of international students at Oxford has grown, meaning there are fewer places for home students of all backgrounds. In 2024, Oxford admitted 100 more undergraduate students than in 2006, but 250 more international students, and consequently around 150 fewer Brits. We seem to be obsessed with the backgrounds of home students and, because we want their money, entirely uninterested in the backgrounds of international students.

- The Office for Students dislikes the state/private metric because of the differences within each of the two categories: there are high-performing state schools and less high-performing independent schools. Last year, when the University of Cambridge said it planned to move away from a simplistic state/independent school target, John Blake, the Director of Fair Access and Participation at the Office for Students, confirmed to the BBC: ‘we do not require a target on the proportion of pupils from state schools entering a particular university.’ So universities have typically shied away from this measure in recent times. If Ministers think it is a key metric after all, and if they really do wish to condemn individual institutions for their state/independent split, it would have made sense to talk to the Office for Students first and to encourage it to issue new guidance. At the moment, the Minister and the regulator are saying different things on an important issue that attracts intense media attention.
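The rescaling behind the second point is simple arithmetic, sketched below in Python. It assumes that the 33.8% is measured only among UK-schooled entrants and that overseas-educated entrants are simply added to the denominator without counting as UK private school pupils. The function names are hypothetical, and the overseas shares in the loop are illustrative inputs for "20%+", not Oxford's published figures.

```python
# Hypothetical helpers for illustration; inputs are the figures quoted in the post.

def private_share_of_all_entrants(private_share_of_uk: float, overseas_share: float) -> float:
    """Rescale a share of UK-schooled entrants into a share of all entrants.

    Assumes overseas-educated entrants sit outside the 33.8% denominator and
    none of them count as UK private school pupils.
    """
    return private_share_of_uk * (1.0 - overseas_share)


def change_in_home_entrants(change_in_total: int, change_in_international: int) -> int:
    """Home entrants are total entrants minus international entrants."""
    return change_in_total - change_in_international


if __name__ == "__main__":
    # Illustrative overseas shares only: "one in five" and a somewhat higher value.
    for overseas in (0.20, 0.25):
        share = private_share_of_all_entrants(0.338, overseas)
        print(f"overseas {overseas:.0%}: UK private share of all entrants {share:.1%}")

    # 2024 vs 2006: 100 more entrants overall, 250 more international entrants.
    print(change_in_home_entrants(100, 250))  # -150
```

With one in five entrants educated overseas, 33.8% of UK-schooled entrants becomes roughly 27% of all entrants, and the larger the overseas share the closer it moves towards one-quarter. The second helper just makes explicit the subtraction behind the fall in home entrants: 100 more entrants in total, of whom 250 more were international.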

Are independently educated pupils overrepresented at Oxbridge? Quite possibly, but the Minister’s stick/schtick, while at one with the Government’s wider negative approach to independent schools, seems a sub-optimal way to engineer a conversation on the issue. Perhaps Whitehall wanted a headline more than it wanted to get under the skin of the issue?

