As the landscape of college athletics continues to shift, Temple University is experimenting with a new initiative that embeds academic research into the day-to-day operations of its athletics department.

Launched last month, the Athletic Innovation, Research and Education (AIRE) Lab formalizes a partnership between the School of Sport, Tourism and Hospitality Management (STHM) and Temple Athletics.

The AIRE Lab functions as both a research center and a practical hub, aiming to improve program management and student athletes’ development through evidence-based solutions.

Jonathan Howe, an assistant professor at STHM and AIRE Lab co-director, said supporting the student-athlete experience is especially important at an institution like Temple University, which has fewer resources than larger schools for name, image and likeness (NIL) compensation and revenue sharing.

“We’re able to engage in research and leverage university resources in a way that the athletics department may not traditionally be able to do,” Howe said.

Elizabeth Taylor, an associate professor at STHM and AIRE Lab co-director, emphasized the importance of data-driven decision-making.

“The folks who work in student athlete development may not have the capacity to do their full-time jobs while also staying up-to-date on the literature or evaluating the impact and effectiveness of the programs they offer,” Taylor said.

She added that the goal is to “connect with people on campus who are already doing this work and share resources instead of recreating the wheel or paying someone from outside the university.”

State of play: The launch of the AIRE Lab comes amid rapid changes in college athletics, including the rise of NIL compensation, evolving transfer rules and ongoing debates over athlete eligibility and governance. Taylor and Howe said these shifts have increased the need for institutions to understand how policy, culture and organizational decisions affect student athletes.

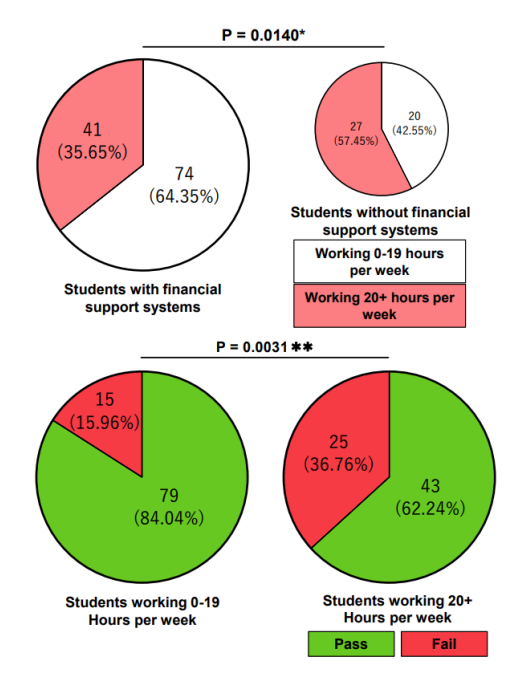

“The additional opportunities through NIL and revenue-sharing create more time demands on student athletes,” Taylor said, noting that potential brand deals can complicate efforts to balance practices and competitions with classes, extracurriculars and internships.

“What the research shows us is that they’re already strapped for time and what comes with that is stress, anxiety and mental health challenges,” she added.

Transfer rules can further complicate the student-athlete experience, particularly for athletes arriving from other institutions, Howe said. “Navigating the academic setting is a lot for athletes who may be transferring in or may have a lucrative NIL deal, so academics may be put on the back burner,” he said.

To bridge the gap between research and daily operations, the athletics department appointed two staff members as lab practitioners to help translate research into practice.

“Everything is changing by the second, and student athletes are having to navigate these changes,” Howe said. “So how can we provide a system that identifies the most beneficial programming to help athletes be as successful as possible in their professional pursuits once they leave campus?”

In practice: One of the lab’s first initiatives was a cooking demonstration held at Temple University’s public health school. The session was designed to help student athletes learn how to prepare simple, nutritious meals.

Taylor said the goal was to encourage student athletes to make practical, healthy choices and develop skills they can use outside of structured team meals.

“The idea behind the cooking demonstration came from a research article on the experiences of college athletes, and one of the things that the athletes talked about is how so much of their life is planned out for them,” Taylor said. She added that while what student athletes eat and how they work out are often prescribed, they aren’t necessarily taught why they’re eating certain foods or doing specific workouts in the weight room.

“It was a great experience for them to learn more about cooking safely and making healthy meals,” she added, noting that more than 20 student athletes participated in the session.

What’s next: Howe said he hopes the lab will serve as a model for other institutions seeking to better integrate research, student-athlete well-being and athletics administration.

“We want to continue leveraging institutional, federal and state resources to provide athletes with opportunities they normally wouldn’t get, especially at a time when higher education budgets are being cut,” Howe said.

“For me, the AIRE Lab allows us to break down some of the long-standing barriers we’ve had at the higher education level. Just because the budget is cut doesn’t mean we have to eliminate programs,” he said.
